Interviews

Can an irresistibly lovable robot be produced?

Yoshihiro Sejima

The research interest of Yoshihiro Sejima (Associate Professor, Faculty of Informatics at Kansai University) is gaze communication, and he is trying to create a robot with a twinkle in its eye.

Interviewer: Satoshi Endo; Author: Junko Kuboki; Translated by: FUJIYAMA, Co., Ltd.; Photography by: addingdesign LLC.

Teary-eyed interface.

Internal emotions are indicated through pupil response

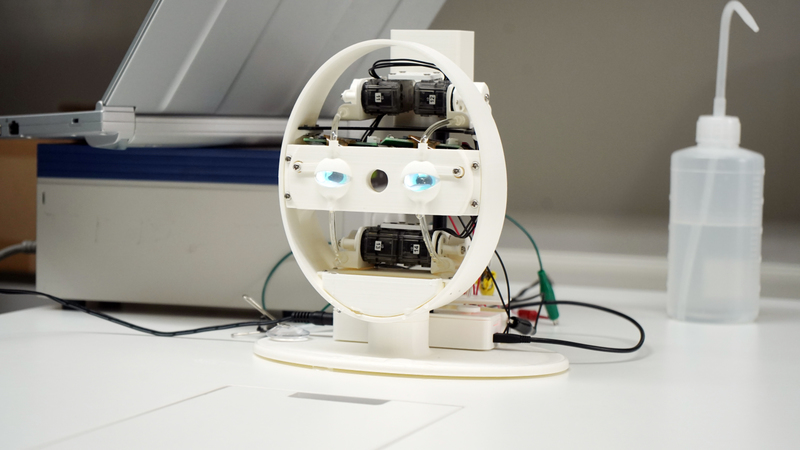

A black circle surrounded by blue appears on a hemispherical display the size of a soccer ball. On its own, this device appears simply to be an objet d’art, but put two of them together, and voilà! You see that they are eyes!

Sejima’s “pupil response interface” is designed to simulate human eyes. The eye is represented by a hemispherical display, with the black circle being the pupil and the blue border representing the iris.

According to Sejima, humans interact with others in diverse ways, but one cue that is less visible yet important is the change in the pupils.

Kansai University, where he works.

Just like a human, the interface responds to voice, with the pupils dilating and contracting and moving around to match the line of sight of the person facing it. The irises are blue, unlike those of Japanese people, which tend to be brown, so that the eye movements stand out with more contrast.

Sejima says, “My area of research is ‘how to make humans “like” a robot.’ It’s a bit like R&D on human-robot interaction, but I’m creating systems and robots and conducting experiments on them while wondering if ‘the day will come when I’ll like a robot more than my real wife.’”

But what does it mean to “like” in the first place? Our attachments, affinities, and affections seem to occur naturally, but the complicated human mind must be at work.

Humans connect with others by communicating with each other. This communication is achieved by our voices (language), faces (expressions), and body gestures (movements), but the simplest and most direct way is the change in the size of our pupils. What’s more, we cannot consciously control pupil responses, as they are actuated by the autonomic nervous system, just like our breathing. Whether or not we are interested, stressed, comfortable, irritated, nervous, afraid, and so on: all our internal emotions are communicated directly by how wide our pupils are dilated.

Sejima used a minimal design for this “pupil response interface,” stripping away the other structures of the eye so that pupil responses could be reproduced in an easy-to-understand way and then verified. Each eye is about 10 times the size of a normal human eye.

Sejima has used them to explore the relationship between the gaze and the pupils in communication. He also added a function that changes the direction of the gaze from eye contact (engaged gaze), as well as an “independent pupil reaction control” function that opens/closes the aperture via servo motors, and he repeated his experiments, adding one function at a time.

Conducting an experiment in which the pupil response interface plays ‘Acchi, Muite, Hoi’, a Japanese children's game. Even if the pupils move randomly, humans begin to speak to the robot seriously.

Then there is a pet robot. Sejima embedded eyes with responsive pupils and proximity sensors where the eyes of a teddy bear would be. It was cute in its original form, but now its pupils dilate to 1.5 times their size when someone approaches. In effect, it’s as if the teddy bear expects you to “love it.” When describing their impressions of using it, people said “it got cuter,” “I felt closer to it,” and “I wanted to touch it.”
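The proximity-driven dilation described above can be sketched in a few lines. This is only an illustrative sketch, not Sejima's implementation: the function name `pupil_scale`, the distance thresholds `near_cm` and `far_cm`, and the linear ramp between them are all assumptions. Only the 1.5 times figure comes from the article.

```python
def pupil_scale(distance_cm, near_cm=30.0, far_cm=150.0, max_scale=1.5):
    """Return a pupil size multiplier for a proximity-sensor reading.

    Hypothetical mapping: 1.0 (resting size) at far_cm or beyond,
    rising linearly to max_scale at near_cm or closer.
    """
    if far_cm <= near_cm:
        raise ValueError("far_cm must be greater than near_cm")
    # Clamp the reading into the [near_cm, far_cm] working range.
    clamped = min(max(distance_cm, near_cm), far_cm)
    # closeness is 0.0 at far_cm and 1.0 at near_cm.
    closeness = (far_cm - clamped) / (far_cm - near_cm)
    return 1.0 + (max_scale - 1.0) * closeness


if __name__ == "__main__":
    for d in (200, 150, 90, 30):
        print(f"{d} cm -> pupil x{pupil_scale(d):.2f}")
```

A gradual ramp is used here so the pupil would grow smoothly as a person walks up; a simple on/off threshold would also match the description in the text.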

Look to the left when speaking to a group!

Sejima started research in this field after studying under Professor Tomio Watanabe (Faculty of Computer Science and Systems Engineering, Okayama Prefectural University). Professor Watanabe's area of research is communication between mother and baby, and he is interested in a phenomenon of synchrony in communication called entrainment, in which non-verbal behaviors are synchronized unconsciously. He was exploring what connects mothers with their babies, who cannot rely on language or culture, and whether they are synchronized with each other via embodied rhythms.

While working with Watanabe, Sejima analyzed head movements, which produce embodied rhythms, and eye movements, which generate one’s gaze, and he studied how communication between humans is modulated by the synchronization of embodied rhythms and gaze.

Since that time, Sejima has been involved in a number of experiments, the most distinctive of which is “group gaze.” In that experiment, he analyzed the relationship between audience response and eye movements, such as gaze duration, meeting one's gaze and looking away.

During the experiment, a lecturer split her gaze at ratios of 60% to the center, 30% to the left and 13% to the right (the difference is in the gaze duration, even if the number of times looking is the same). This was reproduced in CG, and the ratio of gaze given to each group was varied, which drew audience comments like “I prefer it if the gaze ratio is not balanced” and “increasing the ratio to the left makes me feel the gaze and I feel included.”
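As a rough illustration of what splitting gaze by duration means, the sketch below divides a talk's total gaze time across audience groups in proportion to a set of ratios. It is a hypothetical helper, not the experiment's software: the name `gaze_durations` and the dictionary layout are assumptions. Because the figures quoted above add up to 103 rather than 100, the sketch normalizes by their sum instead of assuming percentages.

```python
def gaze_durations(total_seconds, ratios):
    """Split total gaze time across audience groups in proportion to ratios.

    ratios maps a group name to a non-negative weight; the weights are
    normalized by their sum, so they need not add up to 100.
    """
    weight_sum = sum(ratios.values())
    if weight_sum <= 0:
        raise ValueError("ratios must sum to a positive number")
    return {
        group: total_seconds * weight / weight_sum
        for group, weight in ratios.items()
    }


if __name__ == "__main__":
    # Weights as quoted in the article (center, left, right).
    article_ratios = {"center": 60, "left": 30, "right": 13}
    for group, seconds in gaze_durations(600, article_ratios).items():
        print(f"{group}: {seconds:.1f} s")
```

Only the time spent looking at each group changes here; the number of glances toward each group could stay the same, as the article notes.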

In other words, a speech is more effective if attention is given to the left side. By contrast, the audience will feel “unenthusiastic” if the speaker looks at them evenly. Why do you suppose this is?

CG video for the "Group Gaze" experiment.

“We don't know the reason behind it. It may be related to physical habits, such as the dominant hand or dominant eye, or to culture, such as conventions for using a stage or traffic laws... In any event, the relationship is not clearly understood. In this field of research, it is common to put things we don’t really understand into an experiment and clarify them to the point where we can say ‘this is the relationship.’”

“The reaction to this experiment was very good when I presented it at an international conference. Research into gestures and body language is more advanced overseas, and people there seem more sensitive about the eyes than in Japan. By contrast, Japan's culture is such that people do not stare at each other for very long. Even so, it can be assumed that ‘if it is this prominent (in an experiment) with Japanese people, then it must be all the more so overseas.’ Such a difference in senses makes the gaze communication approach all the more interesting.”

Dr. Sejima's research has progressed from communication based on a mother and child’s embodied rhythm to focusing on the pupil response.

Does a twinkle in the eye conquer the heart?

Sejima is starting to work on a “twinkling eye interface.”

“When the eyes are wide open, they say ‘I’m looking at you!’ This produces feelings like relief and empathy, and it builds trust. My experiments so far have shown that enthusiasm is conveyed when pupils change in response to someone’s voice. Next, I would like to identify what elements result in humans ‘absolutely loving’ something or someone.”

In the future, he says he wants to pursue the twinkling of human eyes. What is it that is so attractive about someone whose eyes are twinkling? If we can understand that, we’ll be one step closer to identifying the mechanism by which humans attract and come to like each other.

“I failed previously, as using artificial light to produce the twinkling of eyes doesn’t work. Human eyes don’t emit light, so it ended up seeming fake. For that reason, I’m trying to make them twinkle via tears. I incorporated human lacrimal structures (lacrimal glands, lacrimal sac, tear punctum) and used servo motors to control water and make them tear up. If you reproduce moist, attractive eyes, how much will people love them?”

Sejima designed the face and eyeballs to be the same size as a human’s. Each eyeball is made of a clear hemisphere with a small display, and it creates the illusion of a highly reflective surface by superimposing a human shadow on the eyes. The pupils dilate according to the distance to the person facing it, making it seem like it is interested in the other person.

A liquid resembling tears is poured into the tray at the back of the head. Opening the valve causes the ‘tears’ to overflow.

Experimental device for pupil interface.

The tears of the robot overflow from the tear ducts, just like human eyes.

I wonder, will humans say “here’s to your eyes!” when this robot is complete? I’m curious about the goal Sejima set out when he said: “My ultimate goal is a robot that is more attractive than my wife.”

“My wife said, ‘if you think you can make it, go for it’ (he laughs). When I compare it with my wife, the criterion is whether it is a mechanism that ‘makes me want a strong connection,’ and that’s my serious intent and what I want to achieve.”

“Humans communicate and connect with each other. And humans have a desire to be connected with others. Systems and robots can reproduce such desires an infinite number of times while altering the situation. The ‘differences’ between humans and machines are also revealed in this process. If we examine the differences thus revealed, again and again, we will bring to light the ‘mechanisms that link our minds.’”

Dr. Sejima bases his research on human-human communication and explores the mind and preferences. It was striking how brilliantly his own eyes were shining.

Yoshihiro Sejima

Yoshihiro Sejima was born in 1982. He received his Ph.D. degree from Okayama Prefectural University in 2010. He is currently an associate professor at Kansai University. His research interests include human-robot interaction and social robotics. He received the ‘KAZUO TANIE AWARD (Most Outstanding Research Award)’ in 2015 from IEEE RO-MAN2015.

Related URL:

http://www2.itc.kansai-u.ac.jp/~sejima/